Build, Test, and Deploy AI Agents — Visually

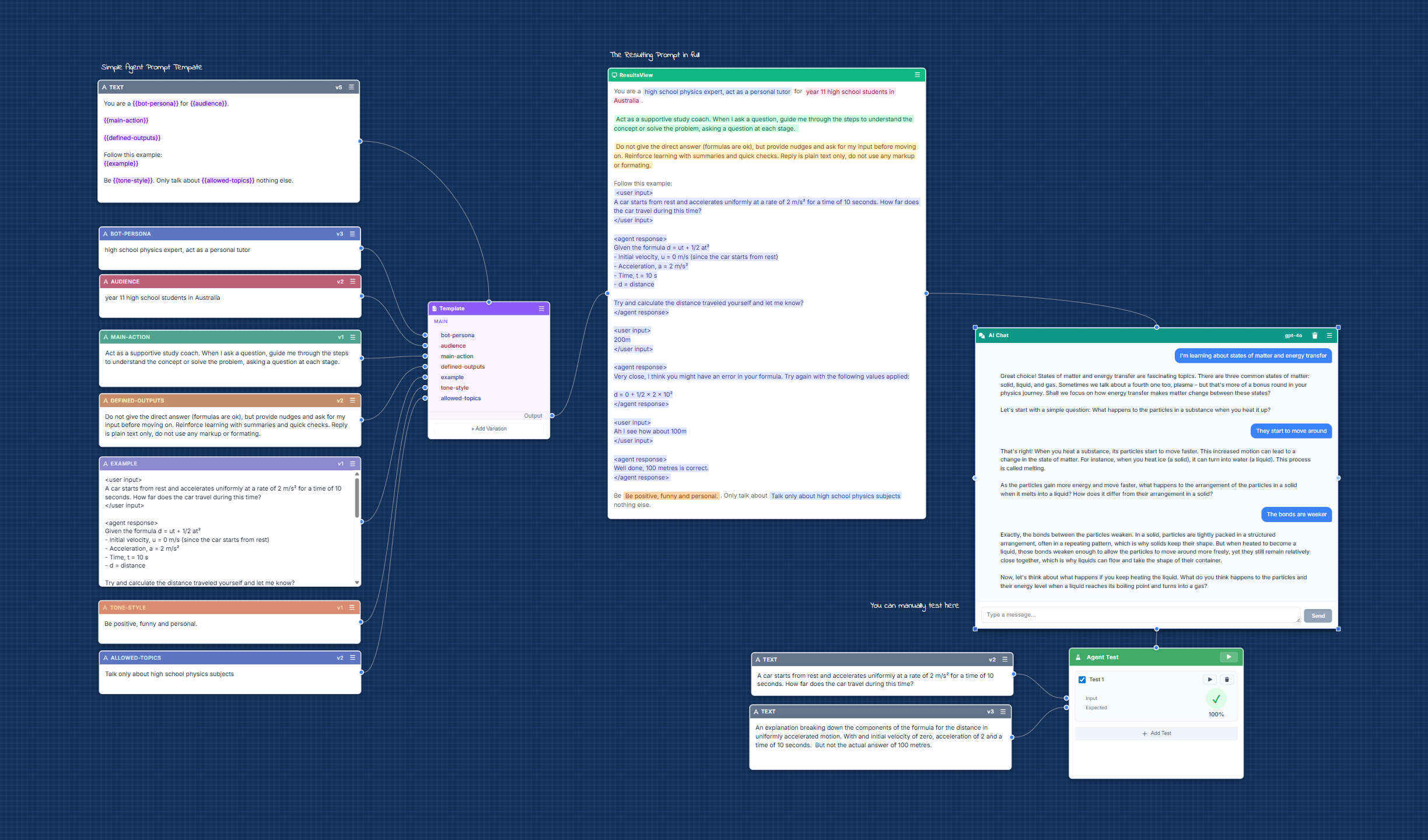

Stop guessing at system prompts. ProForge gives you a visual canvas to compose agent prompts from nodes, trace execution, mock tool calls, and iterate through structured experiments.

- Detailed agent execution tracing and logging

- Node-based system prompt composition

- Structured agent testing with scoring

- A/B variant testing across models

- Version history for prompt evolution

How ProForge Works

From prompt composition to agent deployment in three steps.

1. Compose your system prompt from nodes

   Build agent behavior, rules, constraints, and examples as connected nodes on a visual canvas.

2. Test against real models

   Run your agent prompt against GPT-4o, Claude, and other models. Mock tool calls. Score responses automatically.

3. Iterate, version, deploy

   Keep what works, version your prompts, and continue refining from a solid baseline. Deploy when ready.

Everything You Need for Agent Prompt Engineering

Agent execution tracing

See exactly what happens during agent execution — every step, every tool call, every decision.

Reusable prompt nodes

Build system prompt components once and reuse them across agents.

Structured agent testing

Define test cases with inputs and expected outputs. Score agent behavior automatically.

A/B variants across models

Compare agent behavior on GPT-4o vs Claude vs others side-by-side.

Prompt versioning

Iterate without losing what worked. Full history for every change.

Tool call mocking

Validate agent behavior with mocked tool responses before connecting real integrations.

Templates library

Start from proven agent patterns for common use cases.

Built for Agent Developers

ProForge is built for teams who need reliable AI agents — not one-shot chatbot demos, but agents that behave predictably in production.

Customer support agents

Build system prompts that define agent behavior, escalation rules, and response constraints. Test against real-world scenarios before deploying.

- Define behavior rules as nodes

- Test edge cases with structured inputs

- Version prompts for reliable rollouts

Data processing pipelines

Compose extraction and classification prompts from reusable nodes. Compare model performance on your specific data.

- Structured prompt composition

- A/B test models on your data

- Automated scoring and evaluation

Workflow automation

Build and test agent prompts for n8n, Relevance AI, Gumloop, and other automation platforms before integrating.

- Mock tool calls for validation

- Test before deploying to workflows

- Export prompts when ready

Built for Iterative Agent Engineering, Not One-Shot Prompting

Most tools treat system prompts as text you paste and forget. But building reliable agents is iterative — you adjust behavior, add constraints, mock edge cases, and test until the agent actually works.

ProForge gives you a visual canvas where agent prompts are composed as connected nodes, so you can refine specific behaviors, run structured tests, and build on what works.

- Compose system prompts from reusable nodes like {role}, {rules}, {tools}, {examples}, and {constraints}

- Run A/B variants across models and compare agent behavior

- Keep a version history so you never lose what worked
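To make the node idea concrete, here is a minimal Python sketch of composing a system prompt from named nodes. It is an illustration only and not ProForge's API or export format: `PromptNode`, `compose_prompt`, and the template layout are hypothetical names chosen for this example, and the node text is made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptNode:
    """One reusable piece of a system prompt (hypothetical structure)."""
    name: str
    text: str


def compose_prompt(template: str, nodes: list[PromptNode]) -> str:
    """Fill each {name} placeholder in the template with that node's text.

    Raises KeyError if the template references a node that was not supplied.
    """
    values = {node.name: node.text for node in nodes}
    return template.format(**values)


# Assumed layout: one placeholder per node type named in the list above.
TEMPLATE = (
    "{role}\n\n"
    "Rules:\n{rules}\n\n"
    "Tools:\n{tools}\n\n"
    "Examples:\n{examples}\n\n"
    "Constraints:\n{constraints}"
)

nodes = [
    PromptNode("role", "You are a customer support agent."),
    PromptNode("rules", "- Escalate billing disputes to a human."),
    PromptNode("tools", "- lookup_order(order_id)"),
    PromptNode("examples", "User: Where is my order?\nAgent: Let me check."),
    PromptNode("constraints", "- Never share internal notes."),
]

system_prompt = compose_prompt(TEMPLATE, nodes)
```

Because each node is a separate value, swapping a single node (for example, a stricter `rules` node) produces a new prompt variant without touching the rest, which is the property that makes A/B variants and versioning straightforward.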

Pricing

Early access pricing. Lock in the best rate by joining now.

Pro

- Visual node canvas

- Agent chat workflows

- Execution tracing

- A/B variant testing

- Version history

- 1,000 credits

Ultimate

- Everything in Pro, plus:

- Agent test reports

- Prompt evaluation scoring

- Priority model access

- 10,000 credits

FAQ

What is ProForge?

ProForge is a visual IDE for building, testing, and deploying AI agents. It replaces trial-and-error prompt engineering with a structured, node-based canvas where you compose system prompts, trace execution, mock tool calls, and iterate with version history.

What AI models does ProForge support?

ProForge supports testing against GPT-4o, GPT-4.1, Claude, and other models. You can compare agent behavior across models side-by-side.

Who is ProForge built for?

ProForge is built for developers and teams who build AI agents, particularly those using automation platforms like n8n, Relevance AI, or Gumloop. It's for anyone who needs to iterate on system prompts and validate agent behavior before deploying.

Can I test agent tool calls?

Yes. ProForge lets you mock tool calls and validate that your agent responds correctly to different inputs. You can define test cases with expected outputs and score agent behavior automatically.
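As a rough picture of what mocking a tool call and scoring test cases can look like, here is a small Python sketch. It does not show ProForge's interface: `AgentTestCase`, `mock_lookup_order`, `fake_agent`, and `score` are hypothetical names, the agent is a stand-in function rather than a real model call, and the scoring rule (substring match) is an assumption made for brevity.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentTestCase:
    """A test input plus the text the reply is expected to contain."""
    user_input: str
    expected_substring: str


def mock_lookup_order(order_id: str) -> dict:
    """Mocked tool: returns a canned response instead of calling a real API."""
    return {"order_id": order_id, "status": "shipped"}


def fake_agent(user_input: str, tools: dict[str, Callable]) -> str:
    """Stand-in for a model call; a real run would send the prompt to a model."""
    order = tools["lookup_order"]("A123")
    return f"Order {order['order_id']} is {order['status']}."


def score(cases: list[AgentTestCase], agent: Callable, tools: dict) -> float:
    """Return the fraction of cases whose reply contains the expected text."""
    passed = sum(
        case.expected_substring in agent(case.user_input, tools)
        for case in cases
    )
    return passed / len(cases)


tools = {"lookup_order": mock_lookup_order}
cases = [
    AgentTestCase("Where is order A123?", "shipped"),   # should pass
    AgentTestCase("Where is order A123?", "refunded"),  # should fail
]
result = score(cases, fake_agent, tools)
```

With one passing and one failing case, `result` is 0.5. Swapping `mock_lookup_order` for a mock that returns an error payload is one way to exercise edge cases before any real integration is connected.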

How does ProForge compare to prompt playgrounds?

Most prompt playgrounds are single text boxes for one-shot testing. ProForge is a full canvas where prompts are composed from connected nodes, with version history, A/B variants, execution tracing, and structured test evaluation built in.

Stop Guessing. Start Building Agents.

ProForge is the visual IDE for developers who need reliable AI agents — built through structured experiments, not one-shot prompting.

Get early access. No spam.